
It is not possible for websites to restrict all of their information to a single Web page. That is why sites use multiple pages, each dedicated to a certain piece or type of information. This also makes it easier for visitors to navigate the website and access the information they need. This kind of structured approach results in enhanced user experience, a better understanding of personas and the ability to streamline the buyer’s journey.

All of these things can be achieved through pagination. Pagination is key to streamlining the pages on a website so that visitors arriving from search engines can access them easily. Presenting ordered results on search engines, directing users to pages that contain the information they searched for, and making it easier for users to navigate between multiple pages are all part of pagination. So, what is pagination and how can it help you in your search engine optimization (SEO) endeavours? Let us find out.

What is Pagination?

Pagination is a process whereby a large data set is distributed across several sub-category pages. It makes website navigation smooth and makes information relevant to a user's specific search query available in one go. Websites implement this process so that each page can appear in organic search results.

Pagination also allows users to access information like the total number of pages in a website, the page they are currently on, the history i.e. previously opened pages, and much more. This adds to user awareness, making it easy for them to access multiple pages based on their user journey. For instance, quick control buttons on websites such as the previous page, next page, first page, and so on, can contribute to pagination and save a lot of time for the users. This guides them in their journey, as they would know which pages to visit next. This is a defining trait of a refined and rewarding user interface for websites.
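The quick control buttons described above can be sketched in a few lines of Python. This is only an illustration: the `PageControls` class and the `page_controls` function are hypothetical names, and the sketch assumes pages are numbered from 1.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageControls:
    """Navigation targets for one page of a paginated set (hypothetical type)."""
    current: int
    total: int
    first: int
    last: int
    previous: Optional[int]  # None when the user is on the first page
    next: Optional[int]      # None when the user is on the last page


def page_controls(current: int, total: int) -> PageControls:
    """Work out which page each control button should point to."""
    if total < 1 or not 1 <= current <= total:
        raise ValueError("current must be between 1 and total")
    return PageControls(
        current=current,
        total=total,
        first=1,
        last=total,
        previous=current - 1 if current > 1 else None,
        next=current + 1 if current < total else None,
    )


print(page_controls(3, 10))
```

Returning `None` for a missing target lets a template hide or disable the corresponding button on the first and last pages.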

How does pagination work?

Pagination in SEO works by splitting a single piece of content across a series of Web pages. It is usually done so that exhaustive chunks of content become easily digestible. It best serves websites that sell multiple products or those that deal with long lists of articles.

Pagination distributes content across multiple URLs. You have the option of scrolling to previous or subsequent URLs. If you use pagination to break down a single piece of content and present it on multiple pages, you must make an effort to notify search engines that the specific URL has a multi-page document. You must also make an effort to inform search engine bots about the order of the pages; that is, which ones appear first and which appear later.
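As a rough sketch of how one list of items ends up on several URLs in a fixed order, consider the Python below. The `paginate` and `page_url` helpers, the `?page=N` parameter, and the example.com address are assumptions made for illustration, not something a particular platform prescribes.

```python
from typing import List, Sequence


def paginate(items: Sequence[str], page_size: int) -> List[List[str]]:
    """Split items into consecutive pages of at most page_size entries."""
    if page_size < 1:
        raise ValueError("page_size must be at least 1")
    return [list(items[i:i + page_size]) for i in range(0, len(items), page_size)]


def page_url(base_url: str, page: int) -> str:
    """Page 1 keeps the clean base URL; later pages get a ?page=N parameter."""
    return base_url if page == 1 else f"{base_url}?page={page}"


products = [f"product-{n}" for n in range(1, 8)]  # 7 hypothetical items
pages = paginate(products, page_size=3)

# Walk the pages in order, so the sequence of URLs is explicit.
for number, chunk in enumerate(pages, start=1):
    print(page_url("https://www.example.com/shirts", number), chunk)
```

The order of the generated URLs mirrors the order of the content, which is the information a site needs to expose to crawlers through its page-to-page links.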

Examples of Websites Using Pagination

1. Myntra

Myntra not only offers next-page and previous-page options to visitors while they are searching for something on the page, it also offers category pages with unique filters to ensure that users see the exact type of products they are looking for.

2. Search Engine Journal (SEJ)

SEJ offers users the option to explore any category based on their preference. The website offers a “More Resources” section on each of its blog pages with related content for users along with a “Suggested Articles” section. This gives the users access to tons of content from a single page, which they can explore based on their liking.

3. Slideshare

Slideshare is a platform that aggregates content across a huge number of categories, and the best way to offer users a seamless experience is to segregate that content with pagination. The site paginates its listings alphabetically or numerically.

4. Search engines

Google implements pagination in a very simple and accessible way for the users. The search result pages on Google present the users with alternate search terms at the end of the page if they do not find relevant results on the first page. This takes the user experience up a few notches and makes it easier for users to find the exact results they are looking for.

Why and When to Use Pagination?

- Improved User Experience

The foremost reason to use pagination on a website is to enhance user experience. According to a recent survey, over 70% of users spend their viewing time within the first two folds of a page's content. This means that you need to hook users within that span so that they go on to explore more of your content. Pagination provides that hook. You can use pagination to offer relevant content so that users do not have to search too much, which helps ensure that they stay longer on your website.

- Smooth Navigation

Pagination adds another layer of smooth navigation for the users on any page of a website, even when the page does not use any call to action (CTA) buttons. As users reach the end of a page, they are offered several items related to the content of that page or of the same category. This intuitive offering increases the reasons for users to stay longer on the website and explore content relevant to their liking. And with pagination, numbering is also involved which gives the users an exact idea of how many more pages they can explore. It also gives them a holistic idea of how large your website’s data set is with regard to their search query. For instance, users searching for “men’s sports shoes” on Myntra can gauge how many results to expect just by looking at the number of pages available at the end of a page. This gives them an idea of the variety of products available for their search term.

Google on Handling Pagination

Google announced in 2019 that it no longer uses rel="next" and rel="prev" for indexing Web pages. This means that websites can no longer rely on these link attributes to get a paginated series indexed as a set. John Mueller of Google confirmed that paginated pages are no longer treated differently: each one is viewed as a unique page and judged the way a normal page would be. So, what does this mean? Rather than being considered parts of a single piece of content, the pages in a paginated series are each treated as an individual page.

This update shone a light upon the new pagination best practices for SEO. SEO professionals now have to consider each page under a paginated set as a unique page, and implement the best SEO practice across each one of them in order to rank them. So, how exactly does pagination influence the SEO of your website? Let’s explore.

How Pagination Influences SEO

- Affects website crawling

When you implement pagination on your website, you need to decide which pages are the most important and feature them on your pillar page, which is where the pagination begins. This is because the crawl budget for bots is limited, which means that in a huge data set, not all pages are likely to be crawled or indexed.

So, by prioritizing the important pages, you can make sure that they show up on the search results. This will ensure that your website’s crawl budget is spent on your best pages. And as users visit those pages, they can interact with your website and other related pages through structured pagination. This is a neat way of implementing pagination in SEO for your website.

- Results in creation of ‘thin and duplicate’ content

If the content of a single article is spread across various pages through pagination, search engine bots might not rank those pages. This is because splitting content reduces the value of each page, and search engines only rank valuable content. Search engine bots might consider the content on each page as duplicate or thin content, which can prove to be a negative SEO factor for your website. So make sure to keep only valuable content on each of your pages while carrying out the process of pagination.

- Weakens ranking signals

The ranking signals sent to search engine algorithms might be weakened due to pagination. A good example to understand this is backlinks. Authoritative websites linking to your website is a good signal and boosts your website’s ranking. However, if your website has used pagination, the authority gained from these backlinks might be split across multiple pages, which can dilute the value of the backlink. This can send weak ranking signals to search engine algorithms.

Best Practices For Pagination Implementation

1. Test the current pagination of your site

Open Inspect Element on the page you are testing, use the find feature (Ctrl+F), and type “canonical”. The result shows an entry of the form rel="canonical" href="the URL of the current page". Repeating this check on each paginated page shows which URL every page declares as canonical, which helps you spot paginated pages that search engines may treat as duplicates of one another.
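The same check can be scripted when there are many paginated pages to inspect. The sketch below uses only Python's standard `html.parser` module. The `CanonicalFinder` class, the `find_canonical` function, and the sample markup are hypothetical, and the HTML is passed in as a string, so fetching the page is left out.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Remember the href of the first link element whose rel contains 'canonical'."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel can hold several space-separated values, so split before comparing.
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def find_canonical(html: str) -> Optional[str]:
    """Return the canonical URL declared in the HTML, or None if there is none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical markup standing in for the source of a paginated page.
sample = """<html><head>
<link rel="canonical" href="https://www.example.com/shirts?page=2">
</head><body></body></html>"""

print(find_canonical(sample))
```

Running `find_canonical` over the source of every page in a paginated set gives a quick list of declared canonical URLs to compare against the page URLs themselves.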

2. Create unique content for your paginated pages

As mentioned earlier, Google considers each page unique, and crawls them as such. This means that each of your pages should have unique and valuable content for it to rank on search engine result pages (SERPs). Splitting content across various pages and marking it as a single article can devalue the content or make it seem duplicate. Ensure that each of your paginated pages has unique content that can stand on its own.

3. Use your target keywords smartly

Using keywords in the anchor text that links back to the most valuable pillar pages on your website is a very good SEO practice. Make sure that you use variations of your target keyword across the pages to avoid any instances of keyword cannibalization. You would not want your pages to compete with each other for the same keyword. This can bring your entire website’s rankings down.

4. Arrange your items based on priority

You want your users to know what is the most popular content on your website. In order for that to happen, you must arrange each of your paginated pages in terms of their importance level. The most relevant pages should always be a few links away from the landing or the pillar page. You can even use breadcrumbs to make it easily identifiable to the users.

5. Canonical URLs

Since pagination can weaken the ranking signals of your website, it is important to keep your link quality in check. Make sure that your link structure is not too complicated. One way to address this is to add a canonical tag to each paginated page and to the landing page it belongs to. Google uses the canonical URL as a signal of which page is the most important one to rank on SERPs.

6. rel=”prev” And rel=”next” Attributes

Google no longer supports the rel="next" and rel="prev" attributes. This means that each page needs its own on-page SEO optimization in order to rank, and that pagination has to be managed effectively across the website.

7. Improve link structure

Link structure can help crawlers easily identify pages and mark them out as pages to crawl. For instance, on a shopping site, the main category “Shirts” can have subcategories. In that case, the URLs would look something like these:

https://www.xyz.com/shirts

https://www.xyz.com/shirts/v-neck-shirts

https://www.xyz.com/shirts/v-neck-shirts/adidas-v-neck-shirt-black
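A nested URL structure like this can be generated from the category names themselves. The following is a minimal sketch: the `category_url` helper and its slug rule (lowercase, spaces turned into hyphens) are assumptions for illustration, not a description of how any particular shop builds its links.

```python
from urllib.parse import quote


def category_url(base_url: str, *segments: str) -> str:
    """Join category names into one path, from the main category down to the product."""
    slugs = [quote(segment.strip().lower().replace(" ", "-")) for segment in segments]
    return "/".join([base_url.rstrip("/"), *slugs])


print(category_url("https://www.xyz.com", "Shirts"))
print(category_url("https://www.xyz.com", "Shirts", "V-Neck Shirts"))
print(category_url("https://www.xyz.com", "Shirts", "V-Neck Shirts", "Adidas V-Neck Shirt Black"))
```

Because each deeper URL extends the one above it, both crawlers and users can read the position of a page in the site hierarchy from its address.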

8. Get your facet navigation fixed

If your website offers a filter option that leads users to the most relevant results, you are using facet navigation. These filters, however, can create a new URL for each parameter that users select. This can lead to a practically infinite number of crawlable URLs on your website and can result in duplicated content. You can address this by using AJAX (Asynchronous JavaScript and XML) to apply filters without generating a new URL for each combination. You can also identify the parameters on your filters that do not have any SEO value and prevent crawlers from indexing them.
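One way to reason about filter parameters is to reduce every faceted URL to the version that actually carries SEO value. The sketch below does this with Python's standard `urllib.parse` module. The `strip_facets` function and the parameter names in `IGNORED_PARAMS` are hypothetical; which parameters matter depends entirely on the site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical filter parameters judged to have no SEO value on this site.
IGNORED_PARAMS = {"sort", "color", "size"}


def strip_facets(url: str, ignored=IGNORED_PARAMS) -> str:
    """Drop ignored filter parameters and keep the rest of the URL unchanged."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in ignored
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


print(strip_facets("https://www.example.com/shirts?color=black&page=2&sort=price"))
```

The reduced URL can then serve as the address a filtered page points to as canonical, or as a key for grouping filtered URLs that show essentially the same content.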

Carry Out Pagination in the Right Way

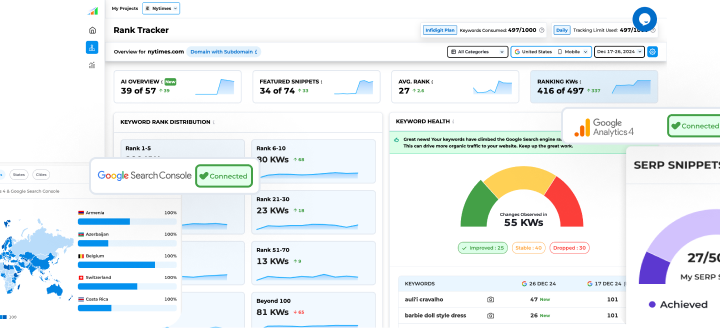

Pagination streamlines the pages on a website so that visitors to the website can easily navigate them and access the information that they are looking for. However, it is also a tricky process that requires expertise from an SEO standpoint. Collaborating with an SEO company like Infidigit can prove to be of major help in carrying this process out seamlessly, without having to worry about your website’s ranking. Infidigit offers many SEO services and identifies what can help your website rank higher on SERPs. Contact us today to learn more.

Is pagination bad for SEO?

While there is some disagreement about the answer to this question, it is a fact that improper pagination harms search engine ranking signals. It dilutes the value of backlinks and internal links, as well as social media shares. Also, if you do not include rel="canonical", pagination may result in duplicate content.

If you allow search engine crawlers to navigate through paginated pages, your crawl budget will be affected. Pagination also has a negative impact on crawl depth, which affects Bing's StaticRank, Google's PageRank, and other measures of link popularity. Another reason to avoid pagination is that it might result in thin content while downgrading your site performance.

Pagination vs Infinite Scroll

Pagination works great if visitors to your site are looking for specific content on your website. However, if your site visitors aren’t sure what they’re looking for and are aimlessly scrolling or navigating through your site, the infinite scroll will help.

The strength of pagination is most visible on e-commerce sites. When people visit an e-commerce site, they want to land on a specific product page, where they can understand the product better by going over the product description and reading reviews. In contrast, infinite scrolling is best suited for social media sites. People enjoy scrolling aimlessly on such sites while seeing a variety of posts.

How does Google handle pagination?

Google treats paginated pages in the same way that it treats other Web pages. The multiple pages of a split piece of content are not indexed as a single piece of content. This means that if a product category is spread across multiple pages, each of them will be indexed separately rather than as a single category landing page.
