1. XML Sitemap Missing

An XML sitemap is a file, written in Extensible Markup Language (XML), that lists the URLs of your website. It directs the search engine crawler to your website’s most important pages. A website can still rank without an XML sitemap, but its absence makes it harder for crawlers to discover all of your pages, which in turn makes ranking more difficult. The crawler uses the sitemap as a reference for collecting your website’s URLs. It also helps search engine optimisation (SEO) efforts by making page errors easier to spot.

The major issues related to XML sitemaps are URLs that return (4XX) not found or (5XX) server errors, NOINDEX URLs, disallowed URLs, and canonicalised URLs. Some other XML sitemap issues are:

- (3XX) redirect URL

- (403) forbidden URL

- Timeout URL

- Presence of URL in several XML sitemaps

You can fix this issue using a tool that generates an XML sitemap.
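
As a rough illustration of what such a tool does, here is a minimal Python sketch that builds a sitemap from a list of URLs and skips duplicates. The function name `build_sitemap` and the example.com URLs are assumptions made for this example; a real generator would also crawl the site and filter out redirected, blocked, or NOINDEX URLs.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return sitemap XML as a string, listing each URL only once."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    seen = set()
    for url in urls:
        if url in seen:  # the same URL should not be listed twice
            continue
        seen.add(url)
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical URLs, for illustration only.
    print(build_sitemap([
        "https://example.com/",
        "https://example.com/blog/",
        "https://example.com/",
    ]))
```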


2. Problems in Robots.txt

Robots.txt is a crucial aspect of technical SEO. It tells search engine crawlers which URLs on your website they can access. It helps SEO by keeping crawlers away from pages that you don’t want to show up in search results. For instance, if you don’t want your site’s career page to appear in search results, Robots.txt can help. It also prevents your site from being overloaded by crawler requests.

The most common issues related to Robots.txt are:

- Allowing crawlers to access sites that are still under development

- Absence of Robots.txt from the root directory

- Incorrect use of wildcards like the asterisk and the dollar symbol
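
One way to catch such mistakes early is to test your rules against a few URLs before publishing the file. The sketch below uses Python’s standard `urllib.robotparser` module; the rules, the example.com URLs, and the helper name `is_crawlable` are assumptions made for this example. Note that this parser matches rules by simple path prefix, so it is not suitable for validating asterisk or dollar wildcard rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /careers/
Disallow: /staging/
"""


def is_crawlable(robots_txt, url, user_agent="*"):
    """Return True if the given rules let `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/blog/post",
                "https://example.com/careers/engineer"):
        print(url, is_crawlable(ROBOTS_TXT, url))
```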

3. No HTTPS Security

Hypertext Transfer Protocol Secure (HTTPS) enables secure data transfer between the server and the client. It indicates that the site is authentic and that data is transmitted in encrypted form. If the site is not secure, hackers might attempt to steal sensitive information. There is also the possibility of data tampering during transmission. Google also considers HTTP sites insecure. Due to this, the rankings of several websites have slipped.

You can fix this technical SEO problem by following these steps.

- Obtain a secure socket layer (SSL) certificate. It is available from a certificate authority.

- Install the certificate on your server.

- Switch your site from HTTP to HTTPS.

That is it; the HTTP issue is resolved.

4. Indexation Issues

Indexing issues in technical SEO prevent your site from ranking high in search results. When a page is not indexed, Google and other search engines cannot show it to searchers, however good its content. Indexation problems can arise from a variety of causes, including:

- 404 errors

- NOINDEX or NOFOLLOW directives in the meta robots tags of a page’s source code

- Outdated sitemap

- Duplicate content

You can resolve the indexing issues through one of these methods:

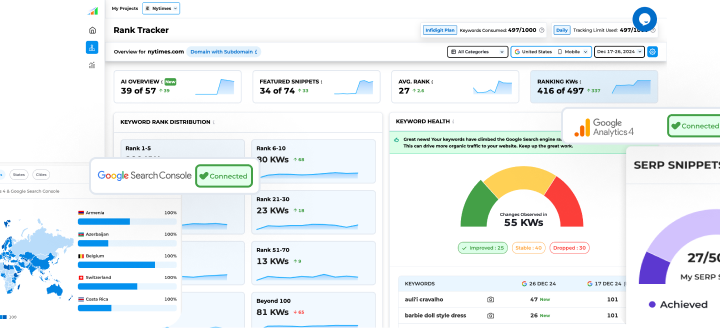

- Use Google Search Console. Its URL inspection tool shows whether a URL is indexed once you submit an inspection request.

- Check for XML sitemap optimisation.

5. Meta Robot Tags NOINDEX

NOINDEX has far more severe consequences for your technical SEO approach than Robots.txt. Applied by mistake, it could remove all of your pages from Google’s index. The NOINDEX configuration comes into play when your site is in the development phase. The chances of making mistakes are significant during this phase because it is difficult to keep track of multiple Web pages that are about to go live or are scheduled to go live.

Here is how you can identify and resolve the NOINDEX issue:

- Always review the source code of your site before making it live.

- Conduct regular audits of your site if it is regularly updated and improved.

- Use one of the many SEO tools to resolve this issue.
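
Reviewing the source code can be partly automated. The following minimal Python sketch, using only the standard `html.parser` module, reports whether a page carries a NOINDEX directive in its meta robots tag. The names `RobotsMetaFinder` and `has_noindex` are assumptions made for this example, and the check does not cover directives sent through HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() != "robots":
            return
        content = attr.get("content") or ""
        for directive in content.split(","):
            if directive.strip():
                self.directives.add(directive.strip().lower())


def has_noindex(html):
    """Return True if the page source contains a meta robots NOINDEX."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="NOINDEX, NOFOLLOW"></head></html>'
    print(has_noindex(page))
```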

6. URL Canonicalisation

Sometimes you may end up with pages that are duplicates or near-duplicates of each other. A single page may also be accessible through multiple URLs.

For example:

https://letsforexamplesay.com/black-shoes/black-and-red-shoes/

https://letsforexamplesay.com/red-shoes/black-and-red-shoes/

In such cases, Google identifies these pages as duplicate versions of the same page. Google will select one URL as the canonical URL and will crawl the remaining URLs less often than the canonical one.

To fix this, select a preferred URL, which then becomes the canonical URL that Google sends traffic to. Alternatively, you can implement 301 redirects sitewide from the duplicate URLs to the preferred one. You may also use canonical tags on all of your web pages. Make sure you follow best practices when adding such tags.

7. Page Speed Issues

Slow pages or websites not only hurt SEO but also frustrate users. It’s inconvenient when a page takes a long time to load. These are some factors that contribute to page speed issues.

- Your Web page has several unoptimised images. You can fix this issue by compressing images and, where suitable, opting for the JPEG format instead of GIF or PNG formats.

- Another factor is heavy use of Flash content. You can fix this by using HTML5 replacements.

- While display advertisements can help you earn money, they can also slow down page load.

- You are undoubtedly slowing down page speed if you don’t use caching. Try fixing this problem by caching the results of frequent database queries, images, and HTTP requests.
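
To make the caching point concrete, here is a minimal Python sketch that caches the result of a slow lookup in memory with the standard `functools.lru_cache` decorator. The function `fetch_product_names` and its simulated delay are assumptions standing in for a real database query; production sites usually rely on a dedicated cache layer rather than an in-process one.

```python
import time
from functools import lru_cache


@lru_cache(maxsize=128)
def fetch_product_names(category):
    """Stand-in for a slow database query (hypothetical)."""
    time.sleep(0.05)  # simulate query latency
    return tuple(f"{category}-item-{i}" for i in range(3))


if __name__ == "__main__":
    start = time.perf_counter()
    fetch_product_names("shoes")  # first call runs the slow query
    first = time.perf_counter() - start

    start = time.perf_counter()
    fetch_product_names("shoes")  # second call is served from the cache
    second = time.perf_counter() - start

    print(f"first call: {first:.4f}s, cached call: {second:.4f}s")
    print(fetch_product_names.cache_info())
```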

8. 301 and 302 Redirection

A 301 redirect is a response status code that sends users and search engines to a new URL when a page has moved permanently. It is used when multiple URLs can be used to visit your site. It also comes in handy when you migrate to a new domain and want older links and search listings to point to your new site. A 302 serves the same purpose as a 301, but only for pages that have moved temporarily. Both of these redirects, if used incorrectly, lower your link equity and hence your rankings.

You can fix this issue by removing redirected pages from your sitemap. You should also look for and fix redirect chains and redirect loops.
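
Redirect chains and loops are easy to spot once you have a map of which URL redirects to which. The Python sketch below walks such a map; the function name `trace_redirects`, the `max_hops` limit, and the sample paths are assumptions made for this example, and the map itself would come from a crawl or from your server configuration.

```python
def trace_redirects(start, redirects, max_hops=10):
    """Follow `redirects` (source URL -> target URL) starting at `start`.

    Returns (path, status), where status is "ok" (zero or one hop),
    "chain" (more than one hop), "loop", or "too_long".
    """
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:  # we came back to a URL already visited
            return path, "loop"
        seen.add(current)
        if len(path) - 1 > max_hops:
            return path, "too_long"
    hops = len(path) - 1
    status = "chain" if hops > 1 else "ok"
    return path, status


if __name__ == "__main__":
    # Hypothetical redirect map, for illustration only.
    redirect_map = {"/a": "/b", "/b": "/c", "/x": "/y", "/y": "/x"}
    for url in ("/a", "/b", "/x"):
        print(url, trace_redirects(url, redirect_map))
```

A chain such as `/a` to `/b` to `/c` is usually fixed by pointing `/a` straight at `/c`.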

9. Rel=canonical

A rel=canonical tag informs search engines which URL is the master copy of a page. It overcomes challenges that the Google search bot may encounter when distinguishing between the original and duplicate content that exists on several URLs. Web pages that have rel=canonical issues find it difficult to rank on Google.

You can quickly resolve this problem by inspecting the source code on the spot. The rel=canonical fixing approach differs depending on the content format and web platform. If you are having trouble repairing it on your own, consider hiring a web developer.
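
If you prefer to script the inspection, the minimal Python sketch below reads a page’s source and reports its rel=canonical URL, or flags a missing or conflicting tag. The names `CanonicalFinder` and `check_canonical` are assumptions made for this example, and the sample URL reuses the illustrative domain from the canonicalisation section above.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr.get("href"):
            self.canonicals.append(attr["href"])


def check_canonical(html):
    """Return the canonical URL, or "missing" / "conflicting"."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing"
    if len(set(finder.canonicals)) > 1:
        return "conflicting"
    return finder.canonicals[0]


if __name__ == "__main__":
    page = ('<head><link rel="canonical" '
            'href="https://letsforexamplesay.com/black-shoes/black-and-red-shoes/"></head>')
    print(check_canonical(page))
```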

10. Images with Missing ALT Tags

Images enhance user experience. They also help decrease bounce rates and increase traffic opportunities. Alt tags, or alternative text, describe the images on your Web page. They assist in the indexation of your page by informing the bot about each image. They also help if the browser fails to render the page correctly and an image does not load: the alt text tells viewers what the image is about. An image-oriented page without alt tags conveys to search engines that the page isn’t user-friendly, and can cause issues for screen readers. Regular site audits are the most reliable way to detect and address this issue.
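
Part of such an audit can be scripted. The following Python sketch lists the images in a page’s source that have no alt text; the names `MissingAltFinder` and `images_missing_alt` are assumptions made for this example. It also flags images with an empty alt attribute, which can be intentional for purely decorative images, so treat the output as a list to review rather than a list of errors.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Records the src of every <img> without non-empty alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        if not (attr.get("alt") or "").strip():
            self.missing.append(attr.get("src") or "(no src)")


def images_missing_alt(html):
    """Return the src values of images that lack alt text."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


if __name__ == "__main__":
    page = ('<img src="a.jpg" alt="Black and red shoes">'
            '<img src="b.jpg">'
            '<img src="c.jpg" alt="">')
    print(images_missing_alt(page))
```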

11. Internal Link Structure

Internal links are hyperlinks that connect one page of your website to another. They allow both search engines and your visitors to browse through your site’s pages in search of the content they are looking for. Internal links assist search engines in discovering new pages on your site, which they then index and display in search engine result pages (SERPs). However, any errors in the link structure directly impact the visibility of your page. Your ranking will suffer if the page has broken internal links, orphan pages, or links to irrelevant pages.

You can detect this issue by conducting timely site audits. Some other steps include deep linking of important pages, adding links to orphan pages, and repairing broken links.
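
As a sketch of what a site audit checks here, the Python functions below take a map of each page to the internal pages it links to and report orphan pages (pages nothing else links to) and broken internal links (links to pages that do not exist). The function names and the sample site map are assumptions made for this example; the map itself would come from a crawl of your site.

```python
def find_orphan_pages(links, home="/"):
    """Return pages that no other page links to (the home page excluded).

    `links` maps each known page to the list of pages it links to.
    """
    linked = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:  # a self-link does not count
                linked.add(target)
    return sorted(page for page in links
                  if page not in linked and page != home)


def find_broken_links(links):
    """Return (source, target) pairs whose target is not a known page."""
    return sorted((source, target)
                  for source, targets in links.items()
                  for target in targets
                  if target not in links)


if __name__ == "__main__":
    # Hypothetical site structure, for illustration only.
    site = {
        "/": ["/blog/", "/shop/"],
        "/blog/": ["/", "/shop/", "/old-post/"],
        "/shop/": ["/"],
        "/landing/": ["/"],
    }
    print("Orphans:", find_orphan_pages(site))
    print("Broken:", find_broken_links(site))
```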

12. Meta Refresh Tag

The meta refresh tag is a technique for redirecting Web users from an old page to a new one. This process helps websites retain traffic when pages move. The tag can also reload the page you are currently viewing at a set interval. However, SEO professionals no longer recommend this technique. The reason? Constant reloading of the page results in a terrible user experience. Another reason is redirection: the search engine will only index the second page, not the page from which you were redirected. Moreover, if you are using an older browser and a redirect occurs every 2-3 seconds, you will be unable to use the Back button.

For redirects, you can fix this problem by using a server-side 301 redirect instead.

13. Not Using Structured Data Markup

Structured data markup is critical for SEO. It helps search engines understand your website’s context, such as which product a page is discussing and what information the page wants to convey to users. It also has an impact on the content snippet shown in search results. If the structured data markup on your website is poor or missing, your page is less likely to stand out in the search results. This leads to poor page traffic, low clicks, and a drop in rankings.

You can resolve this issue by testing your pages with structured data tools, filling out the missing fields, and manually fixing the affected pages. You should also validate the updated markup.
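
For readers who have not seen structured data before, here is a minimal Python sketch that generates a JSON-LD snippet for a product page using the schema.org `Product` and `Offer` types. The function name `product_json_ld` and the product details are assumptions made for this example; which fields a search engine requires for a given rich result should be confirmed with a structured data testing tool.

```python
import json


def product_json_ld(name, description, price, currency):
    """Return a JSON-LD <script> tag describing a product."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
    }
    return ('<script type="application/ld+json">'
            + json.dumps(data, indent=2)
            + "</script>")


if __name__ == "__main__":
    # Hypothetical product, for illustration only.
    print(product_json_ld("Black and red shoes",
                          "Lightweight running shoes.",
                          "49.99", "USD"))
```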

14. Poor Mobile Experience

Another significant factor that hurts your technical SEO is a poor mobile experience. Visitors rarely stick with sites where content or page structure is incompatible with different devices, thereby increasing the bounce rate. Furthermore, many companies use separate URLs for mobile and desktop users. However, this does not help SEO. The split has an impact on your link equity and diverts traffic without notifying users. Google also suspects such sites and downgrades their rankings. To make your site mobile friendly, use these measures:

- Opt for mobile-friendly templates.

- Avoid using Flash content in large volumes.

- Use images in the JPEG format and minimise using GIF or PNG formats.

- Always use readable fonts.

15. Low Text-to-HTML Ratio

The text-to-HTML ratio is low when the content on a Web page is relatively thin in relation to the code on that page. Redundant code impacts the page speed, making the website slower to load. Such pages are difficult to rank in a search engine. A commonly cited target for the text-to-HTML ratio is between 25% and 70%. The ratio is often treated as an indicator of how relevant the page’s content is. Furthermore, if there are too many unnecessary tags, the search engine crawler has a tough time moving through the website. For such pages, indexing is also problematic.

You can resolve this issue by placing JavaScript and CSS in separate files. You must also validate the HTML code and delete any unnecessary HTML tags from your page.
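
The ratio itself is simple to estimate. The Python sketch below divides the length of a page’s visible text by the length of its full HTML source, ignoring inline script and style content. The names `TextExtractor` and `text_to_html_ratio` are assumptions made for this example, and SEO tools may calculate the figure slightly differently.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text, skipping <script> and <style> content."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def text_to_html_ratio(html):
    """Return visible text length as a percentage of total HTML length."""
    if not html:
        return 0.0
    extractor = TextExtractor()
    extractor.feed(html)
    # Collapse runs of whitespace so indentation does not count as text.
    text = " ".join(" ".join(extractor.chunks).split())
    return len(text) / len(html) * 100


if __name__ == "__main__":
    page = ("<html><head><style>p{color:red}</style></head>"
            "<body><p>Hello world</p></body></html>")
    print(f"{text_to_html_ratio(page):.1f}%")
```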

16. Duplicate Content

Duplicate content refers to situations in which identical content appears across several URLs. It makes it difficult for search engine crawlers to decide which URLs to display in search results. Duplicate content can really hurt your ranking. Even if your content is informative and has the right keywords, it will fall short. These factors contribute to duplicate content in SEO.

- Content cloning

- Misunderstanding the URL concept

- Session IDs

- Comment pagination

- Content syndication

- Content scraping

You can fix these issues using the following methods.

- Avoid copy-pasted content

- Redirect duplicate content to the canonical URL

- Add a canonical link to each duplicate page

- Add an HTML link from the duplicate page to the canonical page
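
Before applying these fixes, you first need to know which URLs share the same content. The minimal Python sketch below groups URLs whose text is identical after normalising case and whitespace. The function name `find_duplicate_groups` and the sample pages are assumptions made for this example, and the approach only catches exact duplicates, not near-duplicates.

```python
import hashlib
from collections import defaultdict


def find_duplicate_groups(pages):
    """Group URLs whose body text is identical after normalisation.

    `pages` maps each URL to the text content of that page.
    """
    groups = defaultdict(list)
    for url, text in pages.items():
        normalised = " ".join(text.lower().split())
        digest = hashlib.sha256(normalised.encode("utf-8")).hexdigest()
        groups[digest].append(url)
    return sorted(sorted(urls) for urls in groups.values() if len(urls) > 1)


if __name__ == "__main__":
    # Hypothetical page contents, for illustration only.
    pages = {
        "https://letsforexamplesay.com/black-shoes/black-and-red-shoes/":
            "Black and red shoes for everyday wear.",
        "https://letsforexamplesay.com/red-shoes/black-and-red-shoes/":
            "Black and red  shoes for everyday wear.",
        "https://letsforexamplesay.com/about/":
            "About our shop.",
    }
    for group in find_duplicate_groups(pages):
        print(group)
```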

17. Broken Links

Broken links lead visitors to a Web page that has been removed or no longer exists. The main causes of this issue are renamed pages, archived content, and website closure. Such links have various disadvantages. For example, they lower conversion rates, increase bounce rates, and downgrade your position on search engines.

You can fix this issue using these methods:

- Instead of deleting an out-of-date page from your website, consider updating the content on it. In case of page deletion, the user looking for it will see an error page.

- If you do delete a page, use a 301 redirect. It will lead users to the new page rather than showing an error page.

18. Incorrect Language Declaration

If your website has a global audience, or if it is targeting the global market, language declaration becomes crucial. It aids search engines in language detection, especially when it comes to text-to-speech conversion. Language declaration ensures that translators understand the content and read it properly in a native language. It also assists in local SEO. With an erroneous hreflang, search engines will not serve the regionalised content intended for a specific geographical location.

You can fix this technical SEO issue by conducting a manual inspection of page content. If you detect any flaws, correct them immediately on the page itself. You can also make changes to the script that generates the language tag.

19. Non-Working Contact Forms

According to data, out of 1.5 million visitors, only 49% start filling out a form after seeing it, and only 16% of those complete it. A broken contact form is a flaw in your SEO campaign because it prevents conversions.

A contact form delivers a poor user experience when it has too many required fields, a non-functioning Submit button, and so on. You can resolve these issues by implementing these pointers:

- Avoid using CAPTCHA

- Keep your form brief and limit the number of necessary fields to five.

- Enable the auto-fill feature in the form

- Use the Google autocomplete plugin

- Ensure that the colour, text size and positioning do not look odd.

- Go for catchy call to action (CTA) buttons or text.

Conclusion

SEO is a vast field. It is vital to look into all the parameters discussed here to boost your site’s performance and improve its rankings. Make sure that you conduct site audits regularly. Some other basic things that you can do on your own without requiring an SEO audit service are updating content regularly, optimising images, and updating trending keywords, among others.
